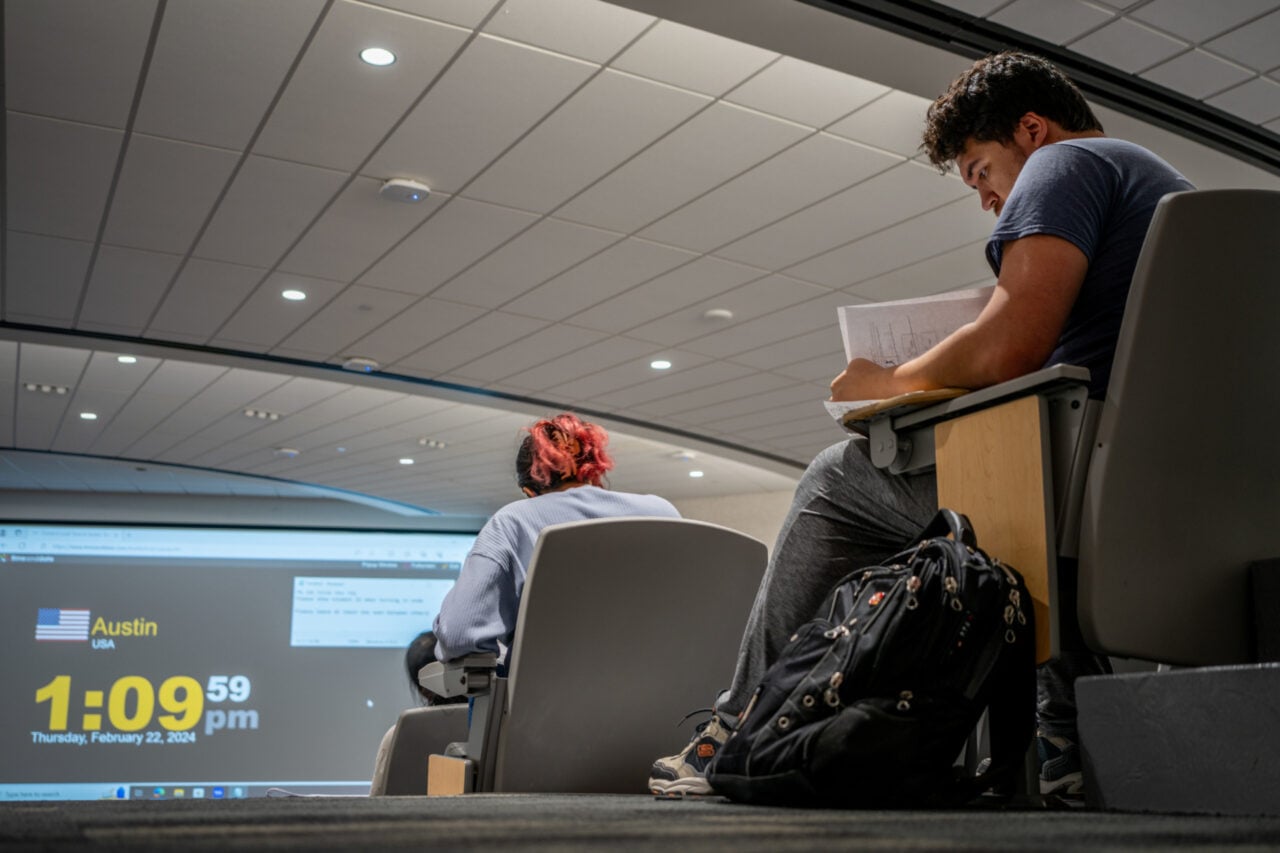

AI Is Inflating Grades and Eroding Learning, Study Finds

New research suggests students are earning higher marks while retaining less, raising the stakes for how schools deploy generative tools.

What matters

- A study reported by Gizmodo claims students using generative AI earn higher grades while retaining less material.

- The initial report lacks methodological detail, and independent verification of the study has not yet appeared in available coverage.

- Universities remain divided on AI policy, with no consensus on whether to ban, regulate, or integrate generative tools into coursework.

- If grades become decoupled from actual skill, workforce readiness could suffer and employers may lose trust in academic credentials.

What happened

On May 15, Gizmodo reported on a new study finding that students who use generative AI are earning higher grades while actually learning less. The article frames the dynamic as a decoupling of performance from comprehension: assignments receive better marks even as students retain less of the underlying material. The initial report did not include details about the study’s methodology, sample size, or institutional affiliation, and the underlying paper had not been independently verified in available coverage at press time. Still, the claim resonates with anecdotal reports from instructors who say AI-generated essays and problem sets often look polished but collapse under basic follow-up questioning.

Why it matters

The findings land at a precarious moment for academic policy. Three years into the generative-AI era, universities remain divided on how to respond. Some institutions have embraced AI literacy as a core competency; others have banned large language models outright in certain courses. The result is a patchwork of rules that leaves both students and employers uncertain about what a grade actually represents.

If higher marks are indeed masking weaker mastery, the threat goes beyond cheating. Degrees and GPAs function as labor-market signals—proxies for critical thinking, writing ability, and domain knowledge. Should those signals become inflated by AI assistance, employers may face a wave of credential inflation without a corresponding rise in skill. That gap could force companies to build their own assessments or devalue traditional academic credentials entirely. For students, the short-term benefit of an easier A may carry a long-term cost in workforce readiness.

Public reaction

No strong public signal was available in community discussions at the time of this report.

What to watch

The immediate priority is the underlying study itself. Look for the full paper, its peer-review status, sample demographics, and whether the results hold across disciplines—from humanities essays to STEM problem sets. Independent replication will be essential; early education-AI research has often suffered from small samples and short time horizons.

Beyond the data, watch for shifts in assessment design. Some professors are already moving toward oral exams, in-class proctored work, and process-oriented grading that values drafts and revision history over final output. Accreditation bodies and hiring managers may also begin demanding stronger proof of skill, potentially accelerating interest in alternative credentials, portfolios, and verified competency tests. The next academic year will likely be a proving ground for whether higher education can restore the link between a grade and genuine understanding.

Sources

- Gizmodo, "Students Are Learning Less and Getting Higher Grades Because of AI, Study Finds," May 15, 2026. https://gizmodo.com/students-are-learning-less-and-getting-higher-grades-because-of-ai-study-finds-2000758844

Open questions

- How should educators measure learning when students use generative AI for coursework?

- Can assessment design evolve quickly enough to maintain the integrity of academic credentials?

- Will employers begin to discount traditional degrees if GPA inflation outpaces skill acquisition?

What to do next

Developers

Build evaluation metrics that test comprehension, not just task completion, when integrating AI into educational or productivity tools.

The study suggests output quality and actual learning can diverge; tools need to measure what users retain.
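One way to make that divergence visible is to track two scores per user: the quality of work produced with AI help, and the result of a later unassisted check on the same material. The sketch below is illustrative only. The record fields, the 0 to 1 scale, and the 0.25 threshold are all assumptions for the example and are not drawn from the study.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class LearnerRecord:
    """Hypothetical per-user record; both scores are assumed to be on a 0-1 scale."""
    user_id: str
    assisted_score: float   # quality of work produced with AI help
    retention_score: float  # score on a later, unassisted follow-up check


def retention_gap(record: LearnerRecord) -> float:
    """Positive values mean output quality exceeds what the user retained."""
    return record.assisted_score - record.retention_score


def flag_divergence(records: list[LearnerRecord], threshold: float = 0.25) -> list[str]:
    """Return IDs of users whose gap exceeds the threshold (an arbitrary choice here)."""
    return [r.user_id for r in records if retention_gap(r) > threshold]


# Made-up sample data, for illustration only.
records = [
    LearnerRecord("a", assisted_score=0.9, retention_score=0.5),
    LearnerRecord("b", assisted_score=0.8, retention_score=0.75),
]
mean_gap = mean(retention_gap(r) for r in records)
flagged = flag_divergence(records)
```

Reporting the gap alongside the usual completion metric keeps a rising output score from being read as evidence of learning on its own. How retention is actually measured, such as a delayed quiz or a follow-up question, is left open here.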

Founders

Look for opportunities in assessment and verification tools that certify human learning rather than automate answers.

If grades become decoupled from skills, demand will grow for trusted signals of genuine competence.

PMs

Introduce friction or review steps in AI features to prevent users from outsourcing critical thinking entirely.

Higher grades paired with less learning would suggest that seamless automation can undermine the user’s own development.

Investors

Scrutinize ed-tech and HR-tech startups for how they validate skill acquisition.

Credential inflation may soon become model inflation; due diligence should stress-test assessment validity.

Operators

Audit internal upskilling programs to ensure AI assistance augments rather than replaces employee learning.

Workforce readiness depends on whether staff actually develop skills or merely generate outputs.

Testing notes

Caveats

- This story reports on a research finding and societal trend rather than a shipping product, model release, or developer tool.

- Readers cannot directly test the study's conclusions; they can only await the underlying paper and subsequent replication studies.